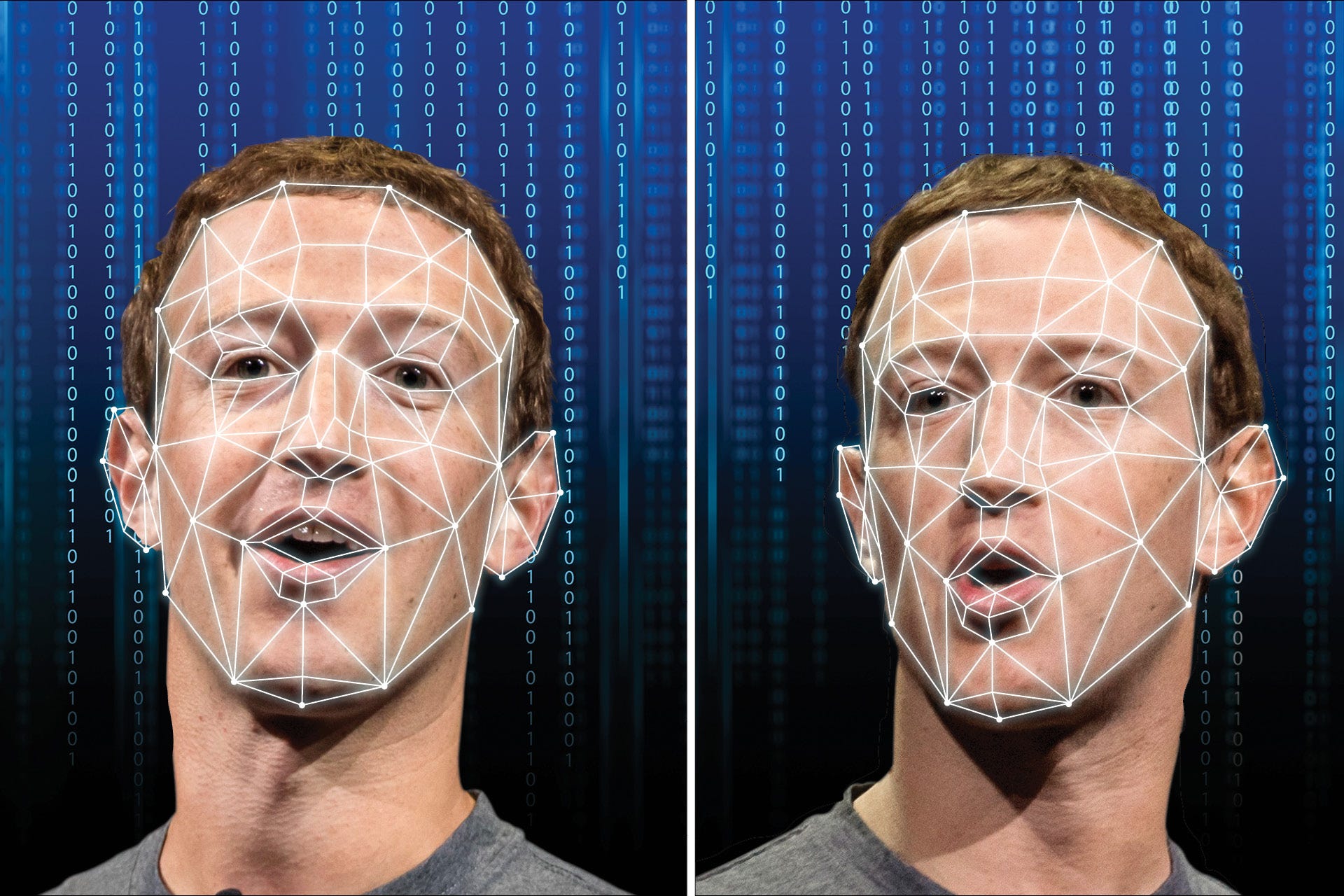

If you have watched a video of Barack Obama calling Donald Trump “A complete dipsh*t,” or one of Mark Zuckerberg bragging about how he has complete control of stolen data belonging to billions of people, then you have seen a Deepfake.

Deepfakes are the 21st century’s answer to Photoshop: they use artificial intelligence to make images of fake events appear real. If you wish to put words in the mouth of a politician, or even star in your very own movie where you dance like a pro, then a deepfake is what you need.

Most of them are, well, pornographic, and there are many of them online. The AI firm Deeptrace found more than 15,000 deepfake videos online as of September 2019, more than double what it had found just nine months earlier.

Of the videos it found, 99% mapped the faces of female celebrities onto porn stars. This is likely to spread even further, since anyone who has the software can do what they want with it.

Other Than Videos, What Else Can They Do?

Deepfake technology can be used to create much more than videos. For example, it can create convincing, realistic photos from scratch. There was a non-existent Bloomberg journalist on Twitter and LinkedIn whose account was filled with deepfake photos.

Audio can be deepfaked too, creating “voice clones” and “voice skins.” These could be the voices of public figures.

How Do They Do This?

Special effects studios and university researchers have over time pushed the boundaries of what is possible with image and video manipulation.

Deepfakes themselves, however, were born in 2017, when a Reddit user of the same name posted doctored porn clips on the site. He swapped the performers’ faces with those of celebrities such as Taylor Swift, Gal Gadot, Scarlett Johansson, and many others.

Step By Step Process

It takes just a few steps to swap faces in a video. First, you run thousands of shots of the faces of the two people you wish to swap through an AI algorithm called an encoder.

The encoder finds and learns the similarities between the two faces and reduces them to their shared common features, compressing the images in the process.

A second AI algorithm, called a decoder, is then taught to recover the faces from the compressed images. Because the faces are different, you train one decoder to recover the first person’s face and another decoder to recover the second person’s face.

To perform the swap, you simply feed the encoded images into the “wrong” decoder. For example, a compressed image of the first person’s face goes into the decoder trained on the second person, which then reconstructs the second person’s face.
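As a rough illustration of the steps above, here is a toy sketch in Python with NumPy. It is not how real deepfake tools are built: they train deep neural networks on actual face images, while this sketch uses small linear maps and random vectors standing in for faces. Every name and size in it (`fake_faces`, `encoder`, `decoder_a`, `train_step`, `DIM`, `LATENT`, and so on) is made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
DIM, LATENT = 16, 4  # toy sizes: flattened "face" length and compressed length


def fake_faces(n):
    """Stand-in for many shots of one person: a fixed pattern plus small noise."""
    base = rng.random(DIM)
    return base + 0.1 * rng.standard_normal((n, DIM))


faces_a, faces_b = fake_faces(200), fake_faces(200)

# One encoder shared by both people, and one decoder per person.
encoder = 0.1 * rng.standard_normal((DIM, LATENT))
decoder_a = 0.1 * rng.standard_normal((LATENT, DIM))
decoder_b = 0.1 * rng.standard_normal((LATENT, DIM))


def encode(faces):
    """Compress faces down to LATENT numbers each."""
    return faces @ encoder


def decode(codes, decoder):
    """Recover full-size faces from compressed codes with the given decoder."""
    return codes @ decoder


def loss(faces, decoder):
    """Mean squared error between the faces and their reconstructions."""
    return float(np.mean((decode(encode(faces), decoder) - faces) ** 2))


def train_step(faces, decoder, lr=0.02):
    """One gradient-descent step on reconstruction error (arrays updated in place)."""
    codes = encode(faces)
    error = decode(codes, decoder) - faces
    grad_decoder = codes.T @ error / len(faces)
    grad_encoder = faces.T @ (error @ decoder.T) / len(faces)
    decoder[:] -= lr * grad_decoder
    encoder[:] -= lr * grad_encoder


before = (loss(faces_a, decoder_a), loss(faces_b, decoder_b))
for _ in range(2000):
    train_step(faces_a, decoder_a)  # decoder A learns to recover person A
    train_step(faces_b, decoder_b)  # decoder B learns to recover person B
after = (loss(faces_a, decoder_a), loss(faces_b, decoder_b))

# The swap: encode person A's faces, then decode with the "wrong" decoder.
swapped = decode(encode(faces_a), decoder_b)

print("reconstruction error before:", before)
print("reconstruction error after: ", after)
print("swapped batch shape:", swapped.shape)
```

The only point of the sketch is the wiring: a single encoder is trained on both people, each decoder is trained on one person only, and the swap is nothing more than pairing the encoder with the other person’s decoder.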

How Can You Spot A Deepfake?

As the technology keeps improving, it will get harder and harder to spot deepfakes. In 2018, however, US researchers discovered that deepfake faces do not blink normally.

No surprise there: most of the images used for training show people with their eyes open, so the algorithms never really learn the art of blinking. With time, of course, we expect the technology to catch up and deepfake faces to start blinking too.
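One simple way to put a number on blinking is the eye aspect ratio, a common blink-detection heuristic that is not necessarily what the 2018 researchers used. The sketch below assumes some face-landmark tool has already produced six (x, y) points per eye for every frame. The function names and the thresholds (0.2 and two frames) are illustrative assumptions, not tested values.

```python
from math import dist


def eye_aspect_ratio(eye):
    """Eye openness from six (x, y) landmarks.

    Assumed order: outer corner, two upper-lid points, inner corner,
    two lower-lid points. The value drops towards 0 as the eye closes.
    """
    p1, p2, p3, p4, p5, p6 = eye
    return (dist(p2, p6) + dist(p3, p5)) / (2.0 * dist(p1, p4))


def count_blinks(ratios, closed_below=0.2, min_frames=2):
    """Count runs of at least min_frames consecutive 'closed' frames."""
    blinks, run = 0, 0
    for ratio in ratios:
        if ratio < closed_below:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # video ended while the eye was still closed
        blinks += 1
    return blinks


def blinks_per_minute(ratios, fps):
    """Blink rate for a clip, given one eye aspect ratio per frame."""
    minutes = len(ratios) / fps / 60.0
    return count_blinks(ratios) / minutes


# Hypothetical landmarks for a wide-open eye.
open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
print(eye_aspect_ratio(open_eye))

# Hypothetical per-frame ratios: two real blinks and one single-frame dip.
clip = [0.3, 0.3, 0.1, 0.1, 0.3, 0.1, 0.3, 0.1, 0.1, 0.1]
print(count_blinks(clip))
```

A clip whose subject blinks far less often than a real person would is worth a closer look, though a low blink rate on its own proves nothing.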

Poor-quality deepfakes, however, are very easy to spot. The lip-syncing may be bad, or the skin tone patchy. There may also be flickering around the edges of the transposed faces.

Fine details such as hair are particularly hard for deepfakes to render well, especially where individual strands are visible on the fringe.

Conclusion

The fact that anyone, from industrial researchers to amateur enthusiasts, can make deepfakes as long as they have the required software is what makes them such a nuisance.

However, universities, governments, and many tech firms are currently funding research to help people detect deepfakes, so do not worry. We just hope that they can be banned as soon as possible, or at least become easy to detect.